Background

When I started working with Salesforce way back in 2008, I had a natural affinity for the Apex programming language, as I'd spent the previous decade working with object-oriented languages - first C++, then 8 years or so with Java. Visualforce was also a very easy transition, as I had spent a lot of time building custom front ends using Java technologies - servlets first, before moving on to JavaServer Pages (now Jakarta Server Pages), which had a huge amount of overlap with the Visualforce custom tag approach.

One area where I didn't have a huge amount of experience was JavaScript. Oddly, I had a few years' experience with server-side JavaScript due to maintaining and extending the OpenMarket TRANSACT product, but that was mostly small tweaks added to existing functionality, and nothing that required me to learn much about the language itself, such as it was back then.

I occasionally used JavaScript in Visualforce to do things like refreshing a record detail from an embedded Visualforce page, onload handling or Dojo charts. All of these had something in common, though: they were snippets of JavaScript that were rendered by Visualforce markup, including the data that they operated on. There was no connection with the server, or any kind of business logic worthy of the name - everything was figured out server side.

Then came JavaScript Remoting, which I used relatively infrequently for pure Visualforce, as I didn't particularly like striping the business logic across the controller and the front end, until the Salesforce1 mobile app came along. Using Visualforce, with its server round trips and re-rendering of large chunks of the page, suddenly felt clunky compared to doing as much as possible on the device, and I was seized with the zeal of the newly converted.

I'm pretty sure my JavaScript still looked like Apex code that had been through some automatic translation process, as I was still getting to grips with the JavaScript language, much of which was simply baffling to my server-side conditioned eyes.

It wasn't long before I was looking at jQuery Mobile to produce Single Page Applications, where maintaining state is entirely the job of the front end, which quickly led me to Knockout.js, as I could use bindings again rather than having to manually update elements when data changed. This period culminated in my Dreamforce 2013 session, "Mobilizing your Visualforce Application with jQuery Mobile and Knockout.js".

Then in 2015, Lightning Components (now Aura Components) came along, and suddenly JavaScript got real. Rather than rendering via Visualforce or including from a static resource, my pages were assembled from re-usable JavaScript components. While Aura didn't exactly encourage its developers down the modern JavaScript route, its successor - Lightning Web Components - certainly did.

All this is rather a lengthy introduction to the purpose of this series of blogs, which are intended to (try to) explain some of the differences and challenges when moving to JavaScript from an Apex background. This isn't a JavaScript tutorial; it's more about what I wish I'd known when I started. It's also based on my experience, which, as you can see from above, was a somewhat meandering path. Anyone starting their journey should find it a lot more straightforward now, but there's still plenty there to baffle!

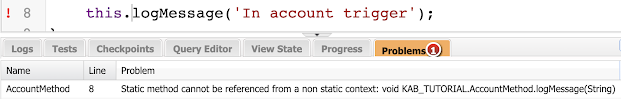

Strong versus Weak (Loose) Typing

The first challenge I encountered with JavaScript was the difference in typing.

Apex

Apex is a strongly typed language, where every variable is declared with the type of data that it can store, and that cannot change during the life of the variable.

```apex
Date dealDate;
```

In the line above, dealDate is declared as type Date, and can only store dates. Attempts to assign it DateTime or Boolean values explicitly will cause compiler errors:

```apex
dealDate = true;         // Illegal assignment from Boolean to Date
dealDate = System.now(); // Illegal assignment from DateTime to Date
```

while attempts to assign something that might be a Date, but turns out not to be at runtime, will throw an exception:

```apex
Object candidate = System.now();
dealDate = (Date) candidate; // System.TypeException: Invalid conversion from runtime type Datetime to Date
```

JavaScript

JavaScript is a weakly typed language, where values have types but variables don't. You simply declare a variable using var or let, then assign whatever you want to it, changing the type as you need to:

```javascript
let dealDate;
dealDate = 2;           // dealDate is now a number
dealDate = 'Yesterday'; // dealDate is now a string
dealDate = new Date();  // dealDate is now a Date object
```
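To watch the type follow the value rather than the variable, a minimal sketch is to record typeof after each assignment. It also shows a trap worth knowing about: calling Date() without new returns the current date and time as a string, whereas new Date() creates a Date object (which typeof reports as 'object'). The observed array is just a hypothetical name for collecting the results.

```javascript
// Record the runtime type of the same variable after each assignment
const observed = [];

let dealDate;
observed.push(typeof dealDate); // 'undefined' - declared but not yet assigned

dealDate = 2;
observed.push(typeof dealDate); // 'number'

dealDate = 'Yesterday';
observed.push(typeof dealDate); // 'string'

dealDate = Date(); // called without new: returns a string
observed.push(typeof dealDate); // 'string'

dealDate = new Date(); // called with new: returns a Date object
observed.push(typeof dealDate, dealDate instanceof Date); // 'object', true

console.log(observed);
// → ['undefined', 'number', 'string', 'string', 'object', true]
```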

The JavaScript interpreter assumes that you are happy with the value you have assigned to the variable and will use it appropriately. If you use it inappropriately, this will sometimes be picked up at runtime and a TypeError thrown. For example, attempting to run the toUpperCase() string method on a number primitive:

```javascript
let val = 1;
val.toUpperCase(); // Uncaught TypeError: val.toUpperCase is not a function
```
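As nothing checks the type before the call, getting Apex-style fail-fast behaviour with a clearer message is up to you. A minimal sketch, using a hypothetical helper named toUpper, checks typeof before calling the string method:

```javascript
// Hypothetical helper: only call toUpperCase() on genuine string primitives
function toUpper(val) {
  if (typeof val !== 'string') {
    throw new TypeError(`toUpper expects a string, got ${typeof val}`);
  }
  return val.toUpperCase();
}

console.log(toUpper('deal')); // DEAL

try {
  toUpper(1);
} catch (err) {
  console.log(err.message); // toUpper expects a string, got number
}
```

The check happens at runtime, so it moves the failure closer to the cause rather than catching it before the code runs, as the Apex compiler would.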

However, as long as the way you are attempting to use the variable is legal, inappropriate usage often just gives you an unexpected result. Take the following, based on a simplified example of something I've done a number of times - I have an array and I want to find the position of the value 3:

```javascript
let numArray = [1, 2, 3, 4];
numArray.indexOf[3];
```

which returns undefined, rather than the expected position of 2.

Did you spot the error? I used square brackets instead of round brackets around the parameter. So instead of executing the indexOf function, JavaScript was quite happy to treat the function as an object and return me the value of its property named '3', which doesn't exist.
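Putting the two forms side by side makes the difference easier to see. Functions are objects in JavaScript, so square brackets perform a property lookup on the indexOf function itself rather than calling it:

```javascript
const numArray = [1, 2, 3, 4];

// Round brackets call the method: the value 3 is at position 2
const position = numArray.indexOf(3);
console.log(position); // 2

// Square brackets look up a property named '3' on the function object;
// no such property exists, so the result is undefined
const lookup = numArray.indexOf[3];
console.log(lookup); // undefined

// The function is an ordinary object with properties of its own
console.log(typeof numArray.indexOf); // function
console.log(numArray.indexOf.name);   // indexOf
```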

JavaScript also does a lot more automatic conversion of types than Apex, making assumptions that might not be obvious. To use the + operator as an example, this can mean concatenation for strings or addition for numbers, so there are a few decisions to be made when evaluating:

```javascript
lhs + rhs
```

1. If either of lhs/rhs is an object, it is converted to a primitive (string, number or boolean)
2. If either of lhs/rhs is a string primitive, the other is converted to a string (if necessary) and they are concatenated
3. Otherwise, lhs and rhs are converted to numbers (if necessary) and they are added
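The three steps above can be modelled in a few lines. This is a simplified sketch rather than the full specification: the helper names isPrimitive, toPrimitive and plus are hypothetical, and it ignores Symbol and BigInt operands, which the real operator treats differently. Objects are converted using Symbol.toPrimitive if they define it (Date does, and prefers a string), otherwise valueOf and then toString are tried.

```javascript
// Hypothetical helper: true for null, undefined, strings, numbers, booleans, etc.
function isPrimitive(value) {
  return value === null || (typeof value !== 'object' && typeof value !== 'function');
}

// Hypothetical helper for step 1: a simplified object-to-primitive conversion
function toPrimitive(value) {
  if (isPrimitive(value)) {
    return value;
  }
  // Objects such as Date define Symbol.toPrimitive; + passes it the hint 'default'
  const custom = value[Symbol.toPrimitive];
  if (typeof custom === 'function') {
    return custom.call(value, 'default');
  }
  // Otherwise try valueOf first, falling back to toString
  for (const method of ['valueOf', 'toString']) {
    if (typeof value[method] === 'function') {
      const result = value[method]();
      if (isPrimitive(result)) {
        return result;
      }
    }
  }
  throw new TypeError('Cannot convert object to primitive value');
}

// Hypothetical model of lhs + rhs (ignores Symbol and BigInt operands)
function plus(lhs, rhs) {
  const l = toPrimitive(lhs); // step 1
  const r = toPrimitive(rhs);
  if (typeof l === 'string' || typeof r === 'string') {
    return String(l) + String(r); // step 2: concatenate
  }
  return Number(l) + Number(r); // step 3: add
}

console.log(plus(1, true));               // 2
console.log(plus(5, '4'));                // 54
console.log(plus([1992, 2015, 2021], 9)); // 1992,2015,20219
```

An array has no Symbol.toPrimitive and its valueOf returns the array itself, which is why the model ends up at toString for the last case.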

Which sounds perfectly reasonable in theory, but can surprise you in practice:

```javascript
1 + true;    // 2 - true is converted to the number 1
5 + '4';     // '54' - rhs is a string, so 5 is converted to a string and concatenated
false + 2;   // 2 - false is converted to the number 0
5 + 3 + '5'; // '85' - 5 + 3 adds the two numbers to give 8, which is then
             // converted to a string to concatenate with '5'
[1992, 2015, 2021] + 9;
// '1992,2015,20219' - lhs is an object (array), which is converted to a primitive
// using the toString method, giving the string '1992,2015,2021'; 9 is converted
// to the string '9' and the two strings are concatenated
```
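One way to avoid depending on these rules is to convert explicitly, so the intent is visible to whoever reads the code next. A minimal sketch using the built-in Number() and String() functions:

```javascript
// Explicitly convert the string to a number when addition is what you want
const sum = 5 + Number('4');
console.log(sum); // 9

// Explicitly convert to strings when concatenation is what you want
const joined = String(5) + String(3) + '5';
console.log(joined); // 535

// Number() doesn't throw on bad input; it returns NaN, so check the result
const parsed = Number('Yesterday');
console.log(Number.isNaN(parsed)); // true
```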

Which is Better?

Is this my first rodeo? We can't even agree on what strong and weak typing really mean, so deciding whether one is preferred over the other is an impossible task. In this case it doesn't matter, as Apex and JavaScript aren't going to change!

Strongly typed languages are generally considered safer, especially for beginners, as more errors are trapped at compile time. There may also be some performance benefits, as you have made guarantees to the compiler that it can use when applying optimisations, but this is getting harder to quantify and in reality is unlikely to be a major performance factor in any code that you write.

Weakly typed languages are typically more concise, and the ability to pass any type as a parameter to a function can be really useful when building things like loggers.
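As an illustration of the logger case, here is a minimal sketch of a hypothetical describe function that accepts a value of any type and formats it according to what it finds at runtime, plus a hypothetical log function that takes any number of arguments. The formatting choices are my own assumptions, not those of any particular logging library.

```javascript
// Hypothetical helper: format any value for a log line based on its runtime type
function describe(value) {
  if (value === null) {
    return 'null';
  }
  if (value instanceof Date) {
    return `Date(${value.toISOString()})`;
  }
  if (Array.isArray(value)) {
    return `Array[${value.length}]`;
  }
  switch (typeof value) {
    case 'string':
      return `"${value}"`;
    case 'object':
      return JSON.stringify(value);
    default:
      return `${typeof value}(${String(value)})`;
  }
}

// Hypothetical logger: any number of arguments, of any type
function log(...values) {
  const line = values.map(describe).join(' ');
  console.log(line);
  return line;
}

log('Deal closed', 42, true, new Date(0), [1, 2, 3], { stage: 'Won' }, null);
// "Deal closed" number(42) boolean(true) Date(1970-01-01T00:00:00.000Z) Array[3] {"stage":"Won"} null
```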

Personally, I take the view that code is written for computers but read by humans, so anything that clarifies intent is good. If I don't have strong typing, I'll choose a naming convention that makes the type of my variables clear, and I'll avoid re-using variables to hold different types even if the language allows me to.
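A minimal sketch of what that can look like in JavaScript, using an illustrative naming convention (the Str and Count suffixes are my own choice for this example, not a JavaScript standard): each variable holds one type for its whole life, and a conversion produces a new, differently named variable rather than overwriting the original. Declaring with const also means the language itself stops the variable being reassigned to a value of a different type.

```javascript
// Raw input arrives as a string, and the name says so
const dealDateStr = '2021-03-01';

// Parse into a new variable rather than re-using dealDateStr for a Date
const dealDate = new Date(`${dealDateStr}T00:00:00Z`);

// Counts are numbers, and the name says so
const lineItemCount = 3;

console.log(dealDate.toISOString()); // 2021-03-01T00:00:00.000Z
console.log(`${lineItemCount} line items on ${dealDateStr}`); // 3 line items on 2021-03-01
```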