
SFDX and the Metadata API Part 3 - Destructive Changes

Introduction

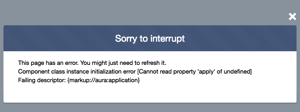

In Part 1 of this series, I covered how you can use the SFDX command line tool to deploy metadata to a regular (non-scratch) Salesforce org, including checking the status and receiving the results of the deployment in JSON format. In Part 2, I showed how to combine the deploy and check into a node script that shows the progress of the deployment.

Creating metadata is only part of the story when implementing Salesforce or building an application on the platform. Unless you have supernatural prescience, it’s likely you’ll need to remove a component or two as time goes by. While items can be manually deleted, that’s not an approach that scales and you are also likely to get through a lot of developers when they realise their job consists of replicating the same manual change across a bunch of instances!

It’s just another deployment

Destroying components in Salesforce is accomplished in the same way as creating them - via a metadata deployment, but with a couple of key differences.

Empty Package Manifest

The package.xml file for destructive changes is simply an empty variant that only contains the version of the API being targeted:

<?xml version="1.0" encoding="UTF-8"?>

<Package xmlns="http://soap.sforce.com/2006/04/metadata">

<version>41.0</version>

</Package>

Destructive Changes Manifest

The destructiveChanges.xml file identifies the components to be destroyed - it’s the same format as any other package.xml file, but doesn’t support wildcards so you need to know the name of everything you want to deep six. Continuing the theme in this series of using my Take a Moment blog post as the source of examples, here’s the destructive changes manifest to remove the component:

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>

<Package xmlns="http://soap.sforce.com/2006/04/metadata">

<types>

<members>TakeAMoment</members>

<name>AuraDefinitionBundle</name>

</types>

<version>40.0</version>

</Package>
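Since wildcards aren't available, the manifest has to list every component by name, which gets tedious once there are more than a handful. As a rough sketch only, a few lines of node could generate the file from a list of names. The function names buildDestructiveChanges and escapeXml are my own illustrative choices rather than anything SFDX provides, and the check that rejects a * member is simply a guard reflecting the no-wildcards rule above.

```javascript
// Hypothetical helper, not part of SFDX: escapes characters that are
// significant in XML text content.
function escapeXml(value) {
  return String(value)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;');
}

// Hypothetical helper, not part of SFDX: builds the text of a
// destructiveChanges.xml file.
// componentsByType maps a metadata type name to an array of component names,
// e.g. { AuraDefinitionBundle: ['TakeAMoment'] }.
function buildDestructiveChanges(componentsByType, apiVersion) {
  const lines = [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<Package xmlns="http://soap.sforce.com/2006/04/metadata">',
  ];
  for (const [typeName, members] of Object.entries(componentsByType)) {
    // Destructive manifests don't support wildcards, so fail early.
    for (const member of members) {
      if (member.includes('*')) {
        throw new Error(`Wildcards are not supported: ${typeName}/${member}`);
      }
    }
    lines.push('    <types>');
    for (const member of members) {
      lines.push(`        <members>${escapeXml(member)}</members>`);
    }
    lines.push(`        <name>${escapeXml(typeName)}</name>`);
    lines.push('    </types>');
  }
  lines.push(`    <version>${escapeXml(apiVersion)}</version>`);
  lines.push('</Package>');
  return lines.join('\n');
}

// Prints a manifest equivalent to the one shown above.
console.log(buildDestructiveChanges({ AuraDefinitionBundle: ['TakeAMoment'] }, '40.0'));
```

The output can be redirected to destructiveChanges.xml, and the empty package.xml still needs to sit alongside it.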

Destroy Mode Engaged

For the purposes of this post I’ve created a directory named destructive and placed the two manifest files in there. I can then execute the following command to remove the component from my dev org.

> sfdx force:mdapi:deploy -d destructive -u keirbowden@googlemail.com -w -1

Note that I’ve specified the -w switch with a value of -1, which means the command will poll the Salesforce server until the deployment completes. As this is a deployment, it can also be handled by a node script in the same way that I demonstrated in Part 2 of this series.

The output of the command is as follows:

619 bytes written to /var/folders/tn/q5mzq6n53blbszymdmtqkflc0000gs/T/destructive.zip using 25.393ms

Deploying /var/folders/tn/q5mzq6n53blbszymdmtqkflc0000gs/T/destructive.zip...

=== Status

Status: Pending

jobid: 0Af80000003zYCfCAM

Component errors: 0

Components deployed: 0

Components total: 0

Tests errors: 0

Tests completed: 0

Tests total: 0

Check only: false

Deployment finished in 2000ms

=== Result

Status: Succeeded

jobid: 0Af80000003zYCfCAM

Completed: 2017-12-24T17:14:02.000Z

Component errors: 0

Components deployed: 1

Components total: 1

Tests errors: 0

Tests completed: 0

Tests total: 0

Check only: false

and the component is gone from my org. If I’ve deployed it to a bunch of other orgs, I just need to re-run the command with the appropriate -u switch.
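Re-running the command by hand for each org is exactly the kind of repetition a script can take on. Here is a minimal sketch of one way to do it in node, using only the switches shown above. The function names buildDeployArgs and deployToOrgs and the example usernames are hypothetical, and the sketch assumes the sfdx command exits with a non-zero code when a deployment fails.

```javascript
const { spawnSync } = require('child_process');

// Builds the argument list for the deploy command used in this post:
// sfdx force:mdapi:deploy -d <dir> -u <username> -w -1
function buildDeployArgs(manifestDir, username) {
  return ['force:mdapi:deploy', '-d', manifestDir, '-u', username, '-w', '-1'];
}

// Hypothetical helper: runs the destructive deployment against each org in
// turn and stops at the first failure. The run parameter defaults to
// spawnSync and can be swapped for a stub when trying the script out.
function deployToOrgs(manifestDir, usernames, run = spawnSync) {
  const completed = [];
  for (const username of usernames) {
    const result = run('sfdx', buildDeployArgs(manifestDir, username), {
      stdio: 'inherit', // show the command's own progress output
    });
    // Assumption: a failed deployment surfaces as a non-zero exit status.
    if (result.error || result.status !== 0) {
      throw new Error(`Deployment to ${username} failed`);
    }
    completed.push(username);
  }
  return completed;
}

// Example with made-up usernames:
// deployToOrgs('destructive', ['dev1@example.com', 'dev2@example.com']);
```

Stopping at the first failure is a deliberate choice here, as I'd rather investigate one broken org than find out later that half of them still have the component.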

Related Posts

- SFDX and the Metadata API Part 2 - Scripting

- SFDX and the Metadata API (Part 1 of this series)

- Selenium and SalesforceDX Scratch Orgs

- SalesforceDX Week 1

- SalesforceDX - View from the coal face

- SalesforceDX official site